TLDR

Manufacturing's shift toward edge AI accelerates between 2026 and 2030, driven by real-time quality demands, regulatory pressure on traceability, and falling inference hardware costs. Factories deploying on-premise AI for defect detection, predictive maintenance, and process optimization report 40–70% reductions in unplanned downtime. The transition favors ruggedized, GPU-capable edge platforms over cloud-dependent architectures.

Overview

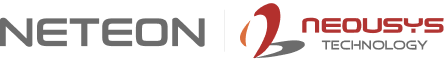

Industrial facilities worldwide processed an estimated 2.3 exabytes of sensor data daily in 2025, yet fewer than 12% of manufacturing organizations deployed AI models at the production line. Cloud-based AI pipelines introduce 150–400ms of round-trip latency, which is unacceptable for applications where a single missed defect costs $50,000 or a 200ms delay causes a robotic collision. As examined in our MIL-STD-810G certification guide, environmental resilience is foundational for production-floor compute. The 2026–2030 window marks the period when three converging trends close this gap: inference hardware reaching price parity with legacy PLCs, regulatory mandates requiring real-time traceability, and pre-trained vision models eliminating the need for in-house ML teams.

Key Trends

Edge-First Architectures Replace Cloud Inference

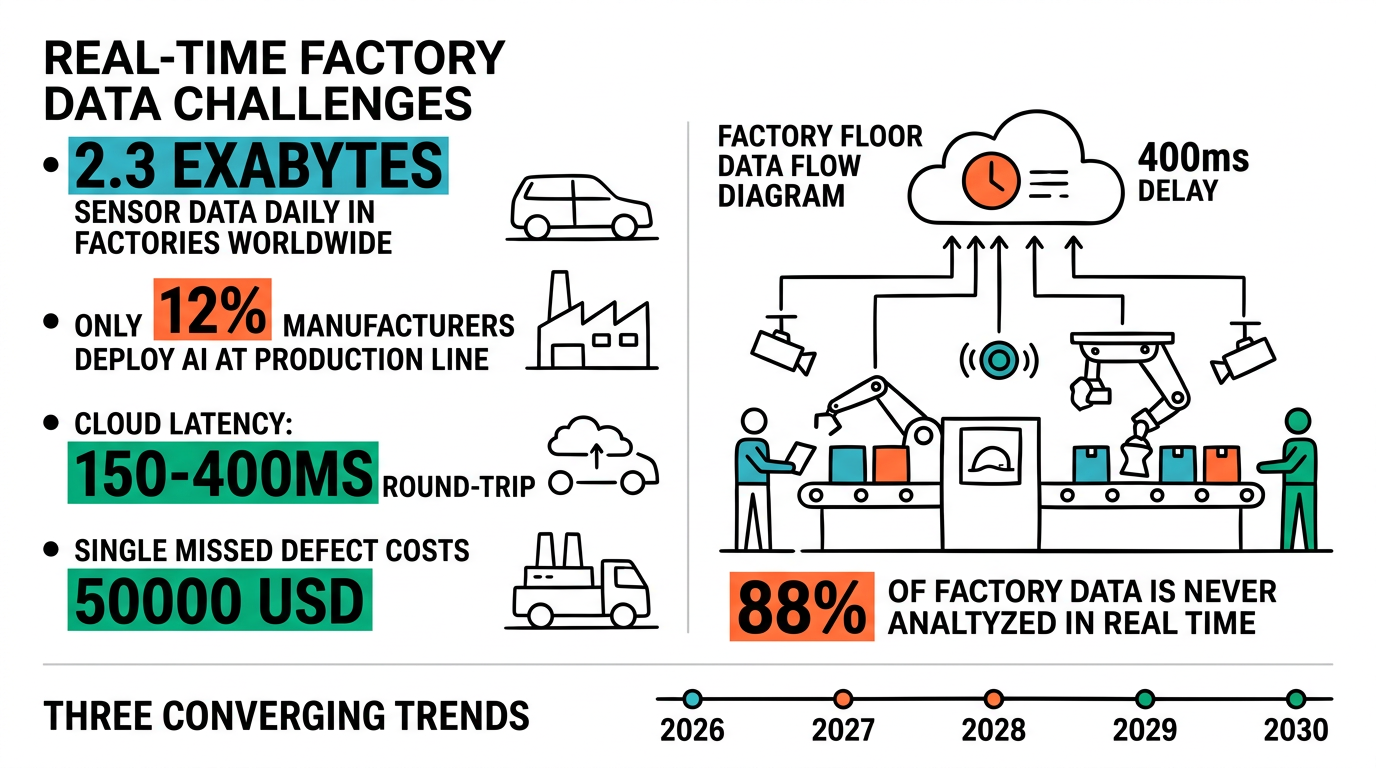

The dominant deployment pattern is shifting. Manufacturers are moving AI models from centralized data centers to ruggedized computers mounted directly on production lines. This eliminates network dependency, cuts inference latency to below 25ms, and keeps proprietary process data on-premise. The Nuvo-11000 running Intel Core Ultra 200 delivers up to 32 TOPS of integrated NPU performance in a fanless chassis rated for 24/7 operation at -25°C to 60°C.

Multi-Modal Sensor Fusion Becomes Standard

Single-camera inspection is giving way to fused inputs: visible-spectrum cameras, thermal imaging, ultrasonic sensors, and vibration data processed simultaneously. Edge platforms like the Nuvo-10108GC support NVIDIA RTX GPUs alongside multiple GigE Vision camera inputs, enabling concurrent processing of 4–8 sensor streams at inference speeds under 30ms per frame.

| Capability | Legacy Approach (2023) | Edge AI Standard (2027 Est.) |
|---|---|---|
| Inspection Latency | 200–500ms (cloud) | 15–25ms (edge) |
| Sensor Inputs | 1–2 cameras | 4–8 multi-modal streams |
| Defect Detection Rate | 92–95% | 99.2–99.7% |
| Downtime for Updates | 2–4 hrs (server patch) | <5 min (OTA edge update) |
| Data Residency | Cloud / hybrid | Fully on-premise |

Predictive Maintenance Moves from Pilot to Production

By 2028, an estimated 45% of discrete manufacturers will run predictive maintenance models at the machine level rather than in a central SCADA historian. The shift requires compute platforms that tolerate vibration, dust, and temperature extremes found at the point of maintenance. Similar challenges in fleet vehicle computing demonstrate how fanless edge platforms outperform traditional rack servers in harsh environments.

Digital Thread Traceability Demands Local Compute

Incoming regulatory frameworks mandate per-part traceability across the production lifecycle. Compliance requires that data logs, inference results, and audit trails be generated in real time at each station, without relying on continuous cloud connectivity.
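One way to picture a station-level audit trail is a hash-chained log, where each inspection record is hashed together with the previous entry so that later tampering is detectable without any cloud service. The sketch below is illustrative only: `InspectionRecord`, `AuditTrail`, and the field names are hypothetical, no specific regulation prescribes this format, and a real station would persist entries to local storage rather than keep them in memory.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, replace


@dataclass(frozen=True)
class InspectionRecord:
    """Hypothetical per-part record produced at one station."""
    part_id: str
    station_id: str
    verdict: str        # e.g. "pass" or "reject"
    confidence: float   # model confidence for the verdict
    timestamp: float    # seconds since epoch, taken from the station clock


def chain_hash(prev_hash: str, record: InspectionRecord) -> str:
    """SHA-256 over the previous hash plus the record's canonical JSON."""
    payload = json.dumps(asdict(record), sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only, in-memory log of (record, hash) pairs."""
    GENESIS = "0" * 64  # placeholder hash used before the first entry

    def __init__(self) -> None:
        self.entries = []

    def append(self, record: InspectionRecord) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        entry_hash = chain_hash(prev, record)
        self.entries.append((record, entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited record breaks the chain."""
        prev = self.GENESIS
        for record, entry_hash in self.entries:
            if chain_hash(prev, record) != entry_hash:
                return False
            prev = entry_hash
        return True


trail = AuditTrail()
trail.append(InspectionRecord("P-0001", "station-3", "pass", 0.98, 1767225600.0))
trail.append(InspectionRecord("P-0002", "station-3", "reject", 0.91, 1767225600.3))
intact = trail.verify()

# Simulate tampering: flip the second verdict but keep its stored hash.
record, stored_hash = trail.entries[1]
trail.entries[1] = (replace(record, verdict="pass"), stored_hash)
tampered_ok = trail.verify()

print(intact, tampered_ok)
```

Because each hash depends on the one before it, the log can be written and checked entirely at the station and synchronized upstream whenever connectivity is available.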

Impact on Edge Computing

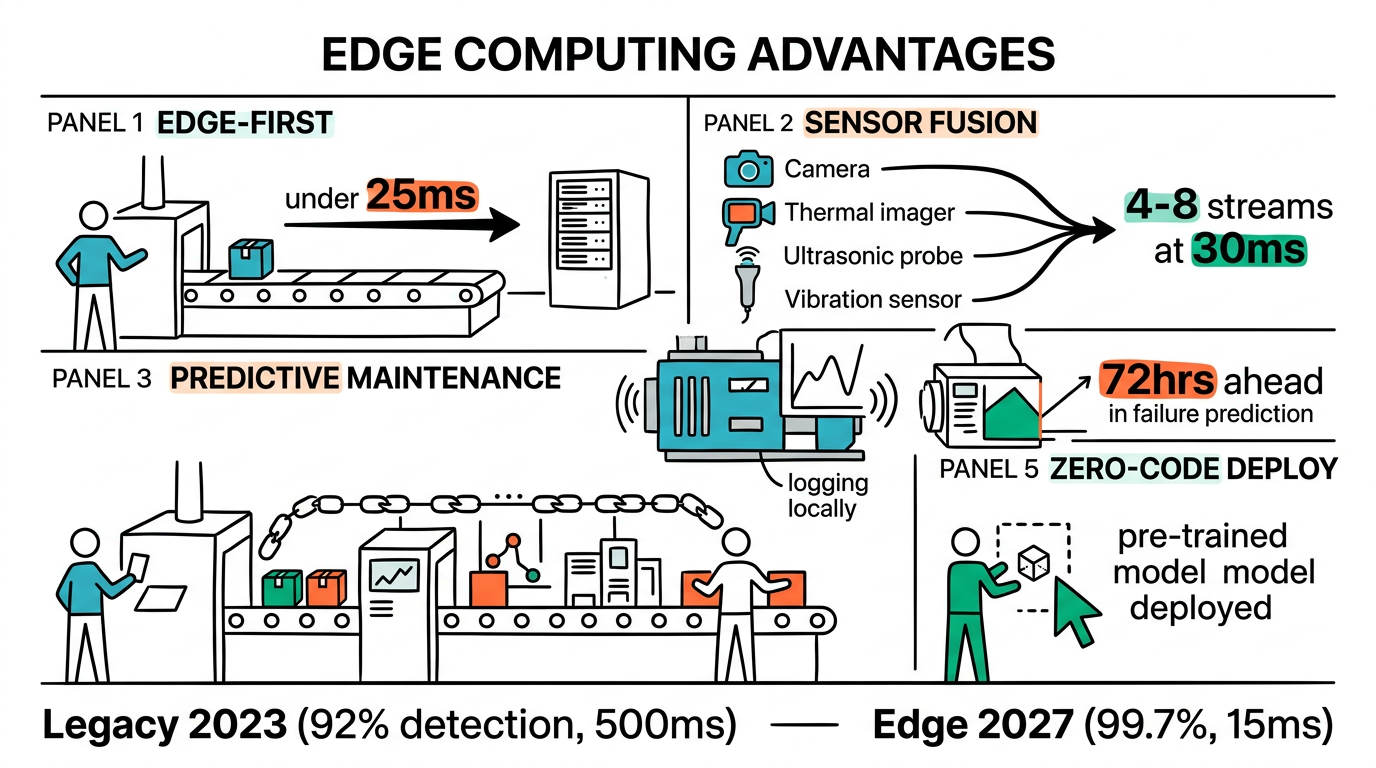

These trends create demand for specific hardware capabilities that cloud infrastructure cannot deliver. GPU/NPU flexibility matters: support for both NVIDIA discrete GPUs and Intel integrated NPUs allows compute to be matched to workload complexity. Environmental tolerance is non-negotiable: a fanless thermal design must sustain continuous operation at 40–60°C factory ambient temperatures without derating. I/O density reduces cabling complexity: native GigE Vision, USB3, and PoE ports eliminate external switch dependencies. The Nuvo-11000 and Nuvo-10108GC exemplify this class of platform, combining Intel Core Ultra or 13th-gen processors with optional NVIDIA GPU acceleration in compact, fanless form factors designed for direct production-line mounting.

What to Watch

Over the next 12–18 months, three developments will shape procurement decisions: NVIDIA's next-generation Jetson modules pushing inference performance above 50 TOPS at under 25W, the EU AI Act enforcement timeline creating compliance deadlines for manufacturers selling into European markets, and the maturation of zero-code deployment tools (NVIDIA TAO, Intel OpenVINO Model Zoo) that shift the bottleneck from model training to hardware deployment.

For a detailed platform comparison applied to food processing quality inspection, see Nuvo-9160GC vs Nuvo-10108GC: Selecting GPU Edge AI for Food Processing Line Inspection.

Conclusion

The 2026–2030 manufacturing landscape belongs to organizations that deploy AI inference at the edge rather than routing data through cloud pipelines. Lower hardware costs, stricter regulatory requirements, and mature pre-trained models make the transition both technically feasible and economically compelling. For more insights on edge AI in manufacturing, follow Neteon on LinkedIn. To discuss your edge computing requirements, contact us at www.neteon.net.

FAQs

What latency improvement does edge AI offer over cloud-based inspection?

Edge AI reduces inspection latency from 150–400ms (cloud round-trip) to 15–25ms (local inference). For production lines running 200+ parts per minute, this difference determines whether defective parts are caught and rejected in real time.
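To make the line-rate argument concrete, the short calculation below compares the latency figures above against the time available per part at 200 parts per minute. It is a back-of-the-envelope sketch that assumes evenly spaced parts and an inspection that must finish within one part interval (no pipelining); the function names are illustrative.

```python
def per_part_window_ms(parts_per_minute: float) -> float:
    """Time between consecutive parts, in milliseconds."""
    return 60_000.0 / parts_per_minute


def budget_share(latency_ms: float, parts_per_minute: float) -> float:
    """Fraction of the per-part window consumed by inspection latency."""
    return latency_ms / per_part_window_ms(parts_per_minute)


LINE_RATE = 200  # parts per minute, as in the answer above
LATENCY_MS = {"cloud": (150, 400), "edge": (15, 25)}  # ranges quoted above

window = per_part_window_ms(LINE_RATE)
for label, (low, high) in LATENCY_MS.items():
    share_low = budget_share(low, LINE_RATE)
    share_high = budget_share(high, LINE_RATE)
    print(f"{label}: {share_low:.0%} to {share_high:.0%} of the {window:.0f} ms window")

# cloud: 50% to 133% of the 300 ms window
# edge: 5% to 8% of the 300 ms window
```

At the upper end of the cloud range, the verdict arrives after the next part has already reached the station, while local inference leaves more than 90% of the window for the reject mechanism.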

Which industries will adopt edge AI in manufacturing fastest?

Automotive and semiconductor manufacturing lead adoption due to zero-defect requirements and existing automation infrastructure. Food/beverage and pharmaceutical follow closely, driven by regulatory traceability mandates under FDA 21 CFR Part 11 and EU AI Act compliance deadlines.

Do manufacturers need in-house ML teams to deploy edge AI?

No. Pre-trained models from NVIDIA TAO and Intel OpenVINO Model Zoo enable deployment without custom training. The bottleneck has shifted from model development to selecting edge hardware with appropriate GPU support, I/O density, and environmental ratings.

What compute performance is needed for multi-camera factory inspection?

A 4-camera visible-spectrum inspection system at 5MP/60fps requires approximately 120–200 TOPS for real-time inference. The Nuvo-10108GC with an NVIDIA RTX GPU delivers this in a fanless form factor rated for continuous factory-floor operation.
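As a rough sanity check on that figure, the sketch below works out the pixel throughput of the 4-camera setup and the per-pixel compute the 120–200 TOPS range implies. It assumes every frame is processed at full resolution and that rated TOPS are fully usable, which real deployments rarely achieve; the function names are illustrative, and the result is an implied density, not a measured model cost.

```python
def pixel_rate(cameras: int, megapixels: float, fps: float) -> float:
    """Total pixels per second across all cameras."""
    return cameras * megapixels * 1e6 * fps


def implied_ops_per_pixel(tops: float, pixels_per_second: float) -> float:
    """Operations available per pixel at a given TOPS rating."""
    return tops * 1e12 / pixels_per_second


rate = pixel_rate(cameras=4, megapixels=5, fps=60)
low = implied_ops_per_pixel(120, rate)
high = implied_ops_per_pixel(200, rate)

print(f"pixel rate: {rate / 1e9:.2f} Gpixel/s")
print(f"implied compute: {low:,.0f} to {high:,.0f} ops per pixel")

# pixel rate: 1.20 Gpixel/s
# implied compute: 100,000 to 166,667 ops per pixel
```

Downscaling frames, cropping to regions of interest, or inspecting at a lower frame rate reduces the pixel rate proportionally, which is why the required TOPS varies widely between otherwise similar lines.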

How does edge AI address data residency concerns in manufacturing?

Edge AI processes all sensor data and runs inference locally, so no production data leaves the factory network. This satisfies data residency requirements, protects proprietary process IP, and eliminates cloud connectivity as a single point of failure.