TLDR

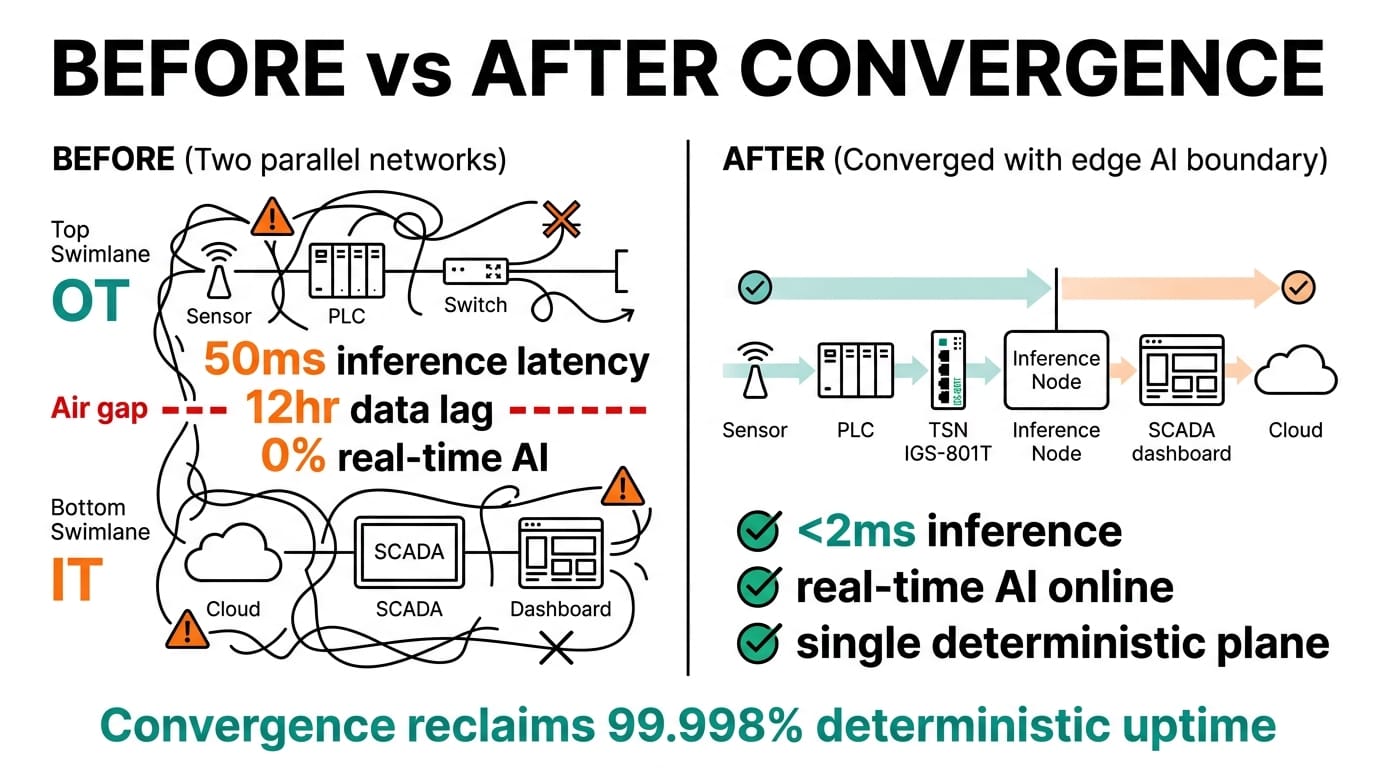

A converged IT/OT network gives an edge AI deployment a single, deterministic data plane that can carry control traffic, sensor telemetry, and inference payloads without the latency and fragility of a separate field bus. This guide shows how to architect that network around a Neousys Nuvo-11000 inference node and PLANET managed switches, with VLAN segmentation, TSN-aware switching, and a clear hand-off between the OT control loop and the IT analytics layer.

Overview

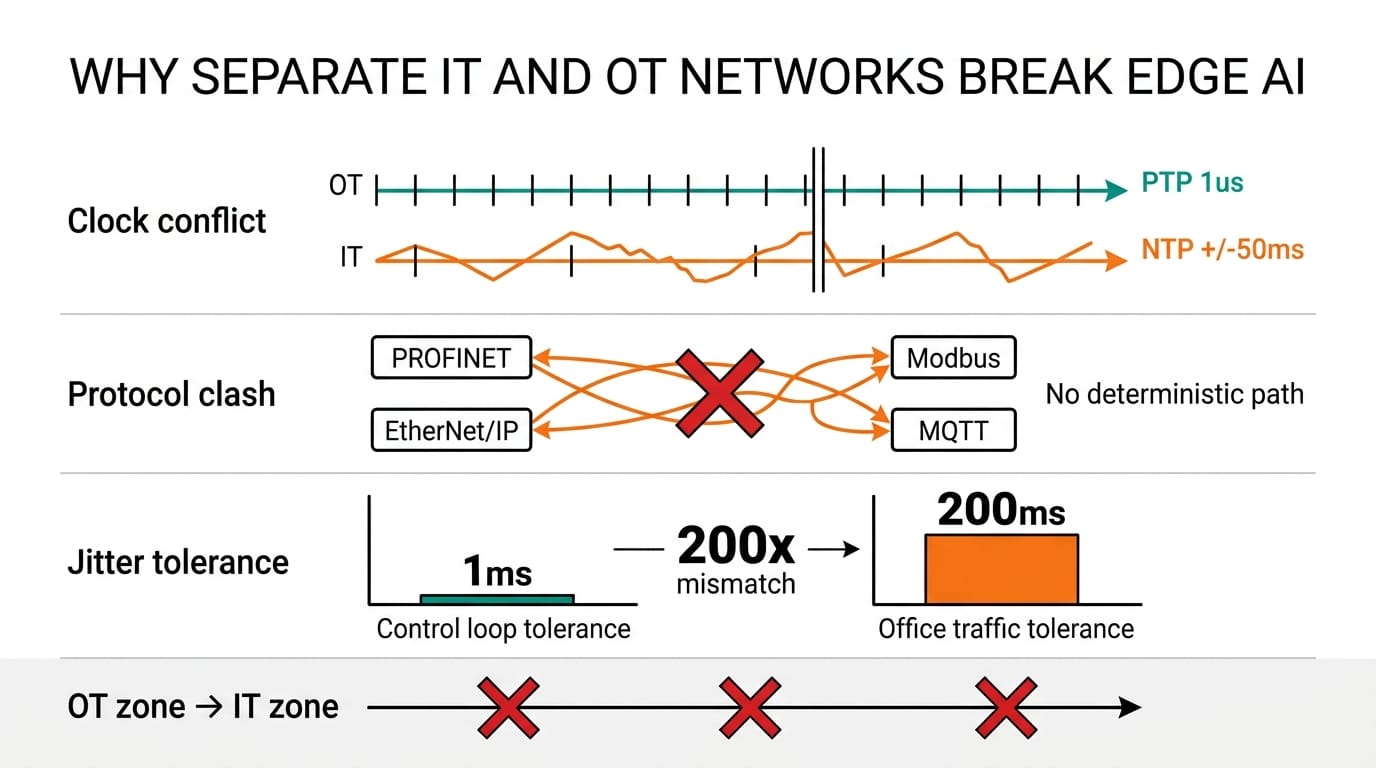

The control side of a plant has its own clock, its own protocols, and its own tolerance for jitter. The IT side wants visibility into all of it. Plumbing them together with a protocol converter and a flat VLAN works for a pilot, but it falls over when an inference workload starts pushing 100 Mbps of camera frames through the same uplink that carries motor commands.

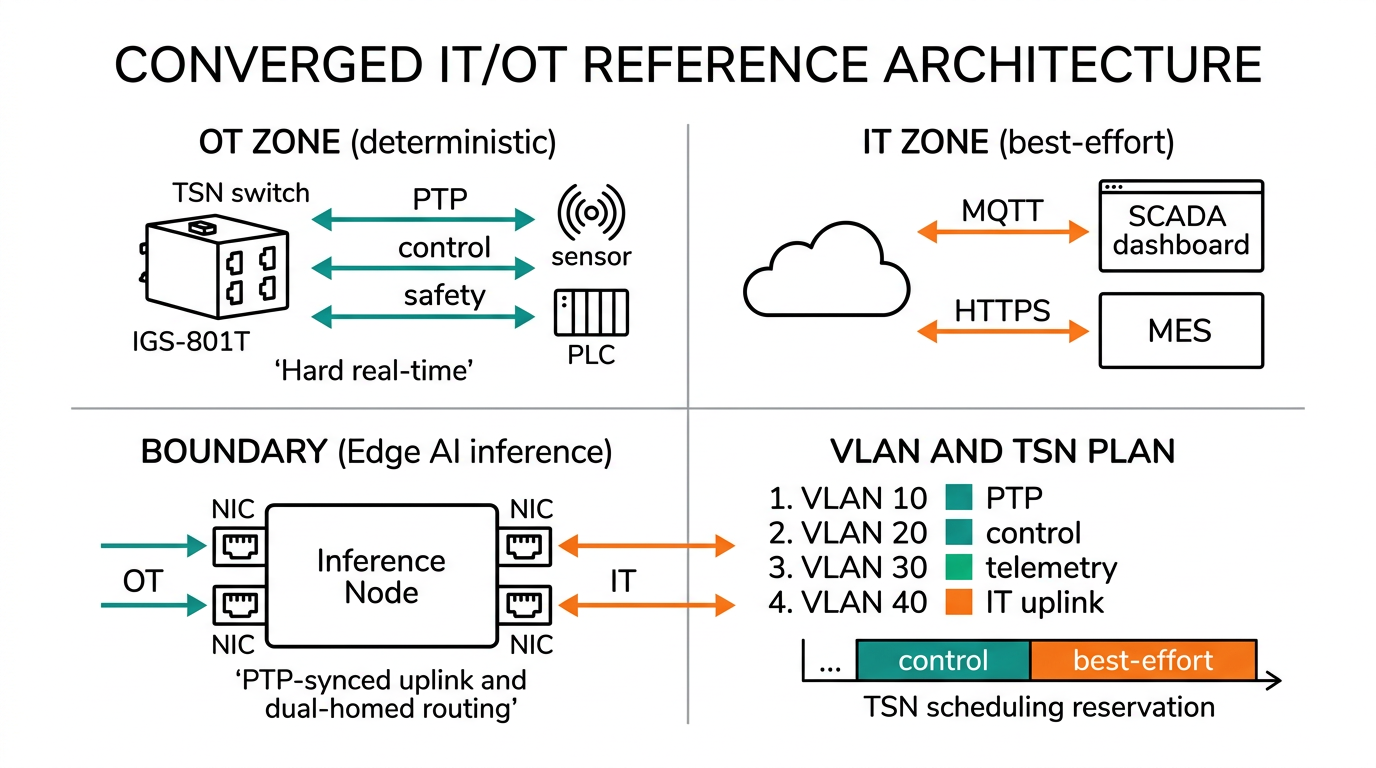

A converged network solves this with a layered architecture: an OT zone that keeps deterministic traffic local, an inference zone where the Nuvo-11000 runs models on de-jittered sensor data, and an IT zone that exports KPIs to MES, historian, and cloud. The earlier guide on what TSN is and why it matters covers the timing primitives this design relies on, and the OPC-UA explainer covers the semantic layer that makes data portable across the boundary. For substation and grid use cases, the pattern is similar to the one outlined in our substation buyer's guide.

System architecture

The reference design has four layers. Each one has a single job and a single class of traffic.

| Layer | Function | Hardware | Protocols | Latency budget |
|---|---|---|---|---|
| Field | Sensors, cameras, PLC I/O | Cameras, ADC modules, drives | EtherCAT, PROFINET, Modbus RTU | Sub-ms |
| OT switching | Deterministic transport | PLANET IGS-801T (8-port industrial GbE) | TSN, IEEE 1588 PTP | <500 µs |
| Inference | Local model execution | Neousys Nuvo-11000 (Intel Core Ultra) | OPC-UA, MQTT, gRPC | 10 to 50 ms |
| IT/uplink | KPI export, remote management | PLANET IGS-10020MT (10-port managed L2) + firewall | HTTPS, MQTT-TLS, SNMP | Best effort |

The Nuvo-11000 sits at the boundary. Two of its Intel I225 NICs go to the OT switch on a PTP-synchronised TSN VLAN. A third NIC goes to the IT uplink. Models read from the OT side, write predictions to OPC-UA tags on the same NIC, and publish summary metrics over MQTT to the IT side. Nothing on the IT VLAN can reach the field bus directly.

For sites that need a heavier inference engine, the Nuvo-10000 drops in with the same NIC layout and an extra PCIe slot for a discrete GPU.

Network design considerations

Three choices decide whether the architecture survives contact with the plant.

VLAN scheme. Use one VLAN per traffic class, not one per cell. A typical mapping is VLAN 10 for PTP and TSN streams, VLAN 20 for OPC-UA, VLAN 30 for camera RTSP feeds, and VLAN 99 for IT management. The Nuvo-11000 carries the OT VLANs (10, 20, 30) on its OT-facing NICs and VLAN 99 on the IT-facing NIC, and routes between them in user space, which keeps the L3 logic auditable.
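
The mapping above is small enough to keep as data and check before it reaches a switch. The following is a minimal sketch, not a vendor tool: the VLAN IDs and traffic classes come from the text, while the `zone` labels, the class name `VlanClass`, and the helper names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VlanClass:
    vlan_id: int
    name: str
    zone: str  # "OT" or "IT"; the zone labels are an assumption, not from the guide


# VLAN IDs and traffic classes as listed in the text.
VLAN_PLAN = [
    VlanClass(10, "PTP and TSN streams", "OT"),
    VlanClass(20, "OPC-UA", "OT"),
    VlanClass(30, "Camera RTSP feeds", "OT"),
    VlanClass(99, "IT management", "IT"),
]


def validate_plan(plan):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    seen = set()
    for entry in plan:
        # 802.1Q reserves VLAN IDs 0 and 4095, so usable IDs are 1 to 4094.
        if not 1 <= entry.vlan_id <= 4094:
            problems.append(f"VLAN {entry.vlan_id} is outside the range 1-4094")
        if entry.vlan_id in seen:
            problems.append(f"VLAN {entry.vlan_id} is assigned more than once")
        seen.add(entry.vlan_id)
    return problems


def trunk_allowed_list(plan, zone=None):
    """Build a comma-separated allowed-VLAN list, optionally for one zone only."""
    ids = sorted(e.vlan_id for e in plan if zone is None or e.zone == zone)
    return ",".join(str(i) for i in ids)
```

Calling `trunk_allowed_list(VLAN_PLAN, "OT")` gives the list for an OT-facing port, and `validate_plan` catches a duplicated or out-of-range ID before it is typed into a switch configuration.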

QoS and bandwidth reservation. TSN scheduling on the IGS-801T reserves a window for control traffic every cycle. Camera feeds get a separate strict-priority queue; everything else falls into best-effort. Without reserved windows, a model deployment that pulls a 200 MB weight file can briefly knock a servo loop offline.
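
A quick back-of-envelope calculation shows why the reserved window matters. This sketch assumes a 1 Gbps link, a VLAN-tagged maximum frame of 1522 bytes, and 1 MB = 10^6 bytes, and it ignores TCP/IP overhead, so the transfer time is a lower bound. The function names are illustrative.

```python
GBE_BITS_PER_SECOND = 1_000_000_000  # assumed link speed: gigabit Ethernet

# Per-frame overhead on the wire that is not part of the frame itself:
# preamble + start-of-frame delimiter (8 bytes) and inter-frame gap (12 bytes).
WIRE_OVERHEAD_BYTES = 20


def frame_time_us(frame_bytes, link_bps=GBE_BITS_PER_SECOND):
    """Microseconds one frame occupies the link (frame_bytes includes header and FCS)."""
    return (frame_bytes + WIRE_OVERHEAD_BYTES) * 8 / link_bps * 1e6


def transfer_time_s(payload_bytes, link_bps=GBE_BITS_PER_SECOND):
    """Lower bound in seconds for moving a payload at line rate, protocol overhead ignored."""
    return payload_bytes * 8 / link_bps


if __name__ == "__main__":
    # One full-size VLAN-tagged frame already on the wire delays whatever is behind it.
    print(f"max-size frame: {frame_time_us(1522):.3f} us")
    # The 200 MB weight file from the text, sent at line rate.
    print(f"200 MB transfer: {transfer_time_s(200 * 10**6):.1f} s")
```

Under these assumptions a single full-size best-effort frame holds the link for about 12 µs, and the 200 MB weight file keeps it saturated for at least 1.6 s. Without a reserved window or priority queuing, every control frame sent during that period competes with the transfer.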

Failure domains. Keep the OT switch and the inference node on the same UPS, and keep the IT uplink on a different one. If the IT side goes down, OT keeps running and the Nuvo-11000 buffers metrics locally. The reverse must also be true: an inference crash cannot stall the field bus.
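
Local buffering during an IT outage can be as simple as a bounded queue in front of the publisher. The sketch below is one way to do it, not the Nuvo-11000's built-in behaviour: `MetricBuffer`, the `publish` callable (assumed to return `True` on success), and the `max_items` limit are all hypothetical.

```python
from collections import deque


class MetricBuffer:
    """Hold metrics while the IT uplink is down and drain them in order on reconnect."""

    def __init__(self, publish, max_items=10_000):
        self._publish = publish  # callable(message) -> bool, True when delivered
        # A bounded deque drops the oldest entry when full, so an outage
        # cannot exhaust memory on the inference node.
        self._backlog = deque(maxlen=max_items)

    def send(self, message):
        """Queue a message, then try to flush the backlog. Returns messages delivered."""
        self._backlog.append(message)
        return self.drain()

    def drain(self):
        """Deliver queued messages oldest first; stop at the first failure."""
        sent = 0
        while self._backlog:
            if not self._publish(self._backlog[0]):
                break  # uplink still down; keep the message for the next attempt
            self._backlog.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._backlog)
```

The buffer sits entirely on the IT-facing side of the node, so a full backlog or a failing publisher never blocks the code that reads from the OT side.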

Build steps

The minimum viable converged stack takes about a day to bring up.

- Configure VLANs 10/20/30/99 on the IGS-801T and the IGS-10020MT, with trunking on the inter-switch link.

- Enable IEEE 1588 PTP on the OT switch and on the two Nuvo-11000 NICs that face it. Confirm offset stays under 1 µs.

- Install the OPC-UA server on the Nuvo-11000. Map the PLC tag set, then expose model outputs as new tags.

- Set up an MQTT broker on the IT side and a one-way bridge from OPC-UA to MQTT for KPI traffic.

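- Lock the firewall: the inference node may open outbound connections to the MQTT broker port, and SSH from IT to OT is allowed only through a jump host. Everything else from IT to OT is blocked.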
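
The one-way bridge in the steps above comes down to an allowlist and a mapping from tags to topics. The following sketch leaves out the OPC-UA and MQTT client libraries on purpose: `read_tags` and `publish` are injected callables, and the tag names, the topic prefix, and the JSON payload shape are hypothetical.

```python
import json

# Hypothetical tag names and topic prefix, for illustration only.
KPI_TAGS = {"Line1.DefectRate", "Line1.Throughput"}
TOPIC_PREFIX = "plant/kpi"


def to_mqtt(tag, value, timestamp):
    """Map one OPC-UA tag reading to an MQTT (topic, payload) pair."""
    topic = f"{TOPIC_PREFIX}/{tag.replace('.', '/')}"
    payload = json.dumps({"value": value, "ts": timestamp}, sort_keys=True)
    return topic, payload


def bridge_once(read_tags, publish, now):
    """Copy allowlisted tags from the OT side to the IT side; nothing flows back.

    read_tags: callable returning {tag_name: value} from the OPC-UA server.
    publish:   callable(topic, payload) that hands the message to the MQTT client.
    now:       timestamp to attach to every reading in this pass.
    """
    forwarded = 0
    for tag, value in sorted(read_tags().items()):
        if tag not in KPI_TAGS:
            continue  # raw PLC tags never leave the OT zone
        publish(*to_mqtt(tag, value, now))
        forwarded += 1
    return forwarded
```

Because the bridge only reads from OPC-UA and only writes to MQTT, there is no code path by which a message arriving from the IT side can become a tag write.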

Validation

Three tests catch most of the things that break.

| Test | Method | Pass criterion |
|---|---|---|
| Determinism | Inject a 100 Mbps camera burst, measure PTP offset on a control NIC | Offset stays under 1 µs |
| Inference latency | Timestamp a sensor sample, log model output time | End-to-end under 50 ms at p99 |
| Failover | Pull the IT uplink for 10 minutes | OT keeps running, MQTT backlog drains on reconnect |

Run these every time the model or firmware changes. A converged network is only as deterministic as its last config push.
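
The first two pass criteria are easy to script so they run after every config push. This is a minimal sketch that assumes the PTP offsets (in nanoseconds) and end-to-end latencies (in milliseconds) have already been collected into lists. The function names are illustrative, and p99 is computed with the nearest-rank method, which is one of several common percentile definitions.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def determinism_ok(ptp_offsets_ns, limit_ns=1_000):
    """Pass if the worst absolute PTP offset stays under the limit (1 µs by default)."""
    return max(abs(offset) for offset in ptp_offsets_ns) < limit_ns


def latency_ok(latencies_ms, limit_ms=50.0):
    """Pass if the p99 end-to-end latency stays under the limit (50 ms by default)."""
    return percentile_nearest_rank(latencies_ms, 99) < limit_ms
```

The determinism check uses the maximum rather than a percentile because a single excursion past the limit during the camera burst is the failure this test is meant to catch.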

Conclusion

A converged IT/OT network is less a product than a discipline: VLAN per traffic class, TSN where it is needed, an inference node that respects the timing budget, and a clean firewall between the two sides. Get those right and the Nuvo-11000 plus PLANET switch combination scales from a single cell to a full plant without re-architecting the data plane.

Follow Neteon on LinkedIn for more architecture deep dives, or reach us at [email protected] or www.neteon.net to scope a converged IT/OT pilot for your site.

FAQs

What is a converged IT/OT network?

A single Ethernet fabric that carries deterministic OT control traffic (PTP, TSN, OPC-UA) and IT analytics traffic (MQTT, HTTPS) on segmented VLANs, with an edge inference node like the Nuvo-11000 sitting at the boundary so models can read sensor data on the OT side and publish KPIs on the IT side.

Do I need TSN switches for an edge AI deployment?

You need TSN if a control loop on the same wire as your inference traffic has a sub-millisecond timing budget. For looser cycles, a managed industrial switch like the PLANET IGS-801T with QoS and PTP is usually enough. The deciding factor is whether camera or model traffic can starve a control packet.

How do I keep IT traffic from breaking the OT control loop?

Three things: VLAN per traffic class, strict-priority queuing on the OT switch, and a one-way firewall rule that lets the inference node push KPIs out to IT but blocks any inbound IT traffic from reaching the field bus.

Why use the Nuvo-11000 as the inference node?

It has multiple Intel I225 NICs, which means OT and IT trunks live on physically separate ports. Combined with the Intel Core Ultra package and a fanless enclosure, it can run a model continuously while keeping PTP offset under a microsecond on the OT-facing NICs.

What changes if I need a discrete GPU for inference?

Drop in a Nuvo-10000 instead. Same NIC layout and the same VLAN scheme apply, but the PCIe expansion slot lets you add a workstation-class GPU when the model outgrows iGPU performance.