TLDR

Warehouse AMRs are crossing from "pilot novelty" into the operating backbone of distribution centres. Over the next four years, fleets get bigger, robots take on harder tasks (case picking, mixed-SKU put-away, trailer unload), and the compute moves from a single cabinet PC in the corner to a dedicated edge stack on every robot and every dock. Here is where the technology is going, and what it means for the industrial computer that runs each AMR.

Overview

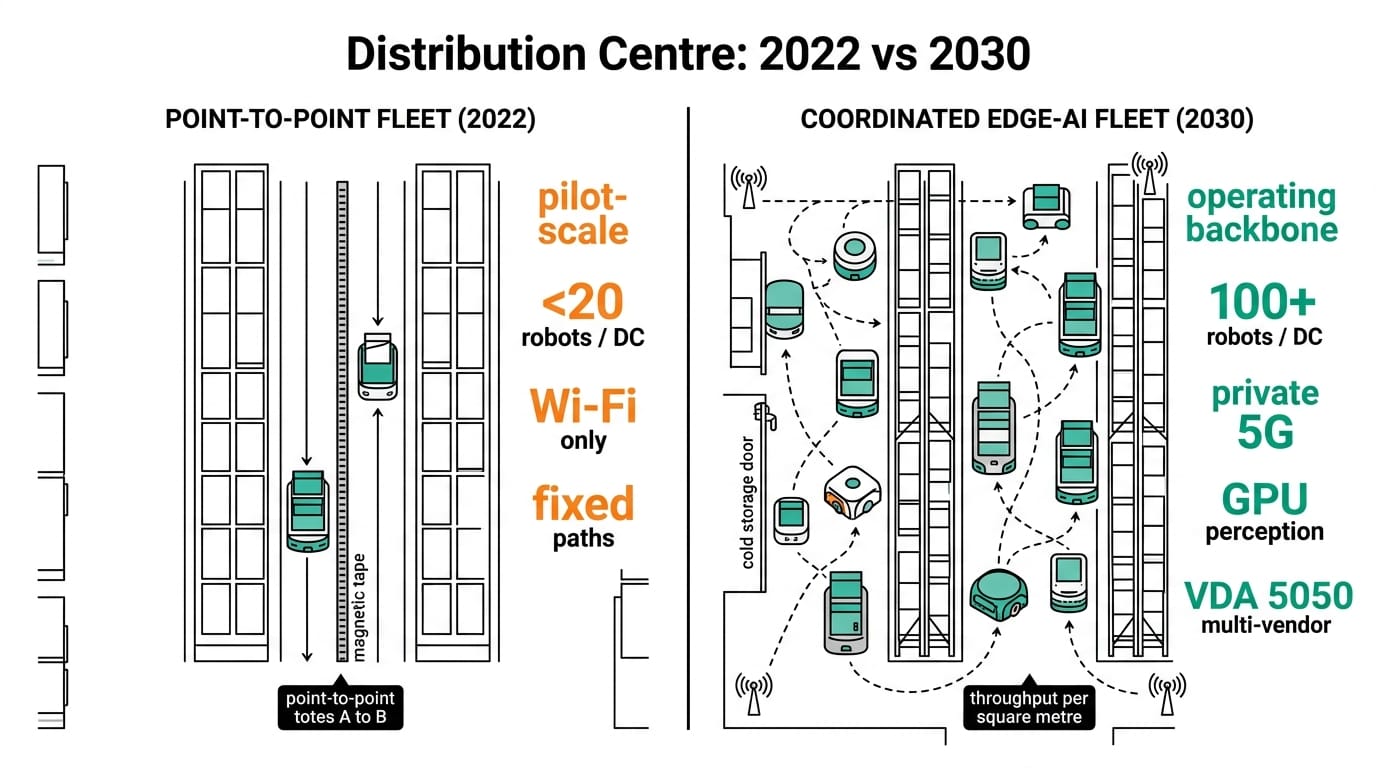

The first wave of warehouse mobile robots was mostly point-to-point. Take a tote from A to B, follow a magnetic strip, dodge a person if you have to. That generation ran fine on modest CPUs and a 2D LiDAR. The next wave is different. A robot is now expected to perceive a 3D scene in real time, classify what it sees, plan around dynamic obstacles, talk to a WMS over MQTT, and stream sensor data back for fleet learning, all without phoning home for inference.

We saw this play out at robot-by-robot scale in our Nuvo-10108GC warehouse AMR case study. It is part of the broader manufacturing arc covered in Edge AI in Manufacturing 2026-2030, and it depends on cleaner protocol plumbing of the kind we walked through in our Modbus-to-MQTT gateway piece for factory AGVs.

Key Trends 2026 to 2030

| Trend | Today (2026) | 2028 inflection | 2030 outlook |
|---|---|---|---|
| Fleet size per DC | 20 to 80 robots | 150 to 300 robots | 500+ robots in tier-one DCs |
| Onboard compute per robot | CPU + small NPU | Jetson-class GPU module | Multi-GPU + dedicated vision SoC |
| Perception | 2D LiDAR + bumper | Stereo + 3D LiDAR fusion | Multi-modal: LiDAR, RGB-D, radar, IMU |
| Network | Wi-Fi 5 / 4G | Wi-Fi 6E / private 5G | 5G NR + TSN backhaul |
| Common task mix | Tote and pallet move | Case pick, put-away | Trailer unload, mixed-SKU pick |
| WMS integration | Polled REST | Event-driven MQTT | Bidirectional digital twin |

The headline number most operators care about is throughput per square metre. Industry trackers (Interact Analysis, ARK, LogisticsIQ) consistently project that AMR unit shipments will roughly triple between 2026 and 2030. The average robot in 2030 is also expected to do meaningfully more work per shift than its 2026 predecessor, mostly because it will see better.
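To put "roughly triple" in annual terms, the short calculation below converts the four-year multiple into an implied compound growth rate. It is a back-of-envelope sketch, not a figure published by any of the trackers named above, and the function name is ours.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Compound annual growth rate that turns 1x into `multiple` over `years`."""
    return multiple ** (1.0 / years) - 1.0


# "Roughly triple between 2026 and 2030" spans four annual steps.
rate = implied_annual_growth(3.0, 2030 - 2026)
print(f"Implied growth: {rate:.1%} per year")  # Implied growth: 31.6% per year
```

Growth of around 30 percent a year, compounding, is the pace that planning for dock power, network capacity, and spare compute has to keep up with.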

Impact on Edge Computing

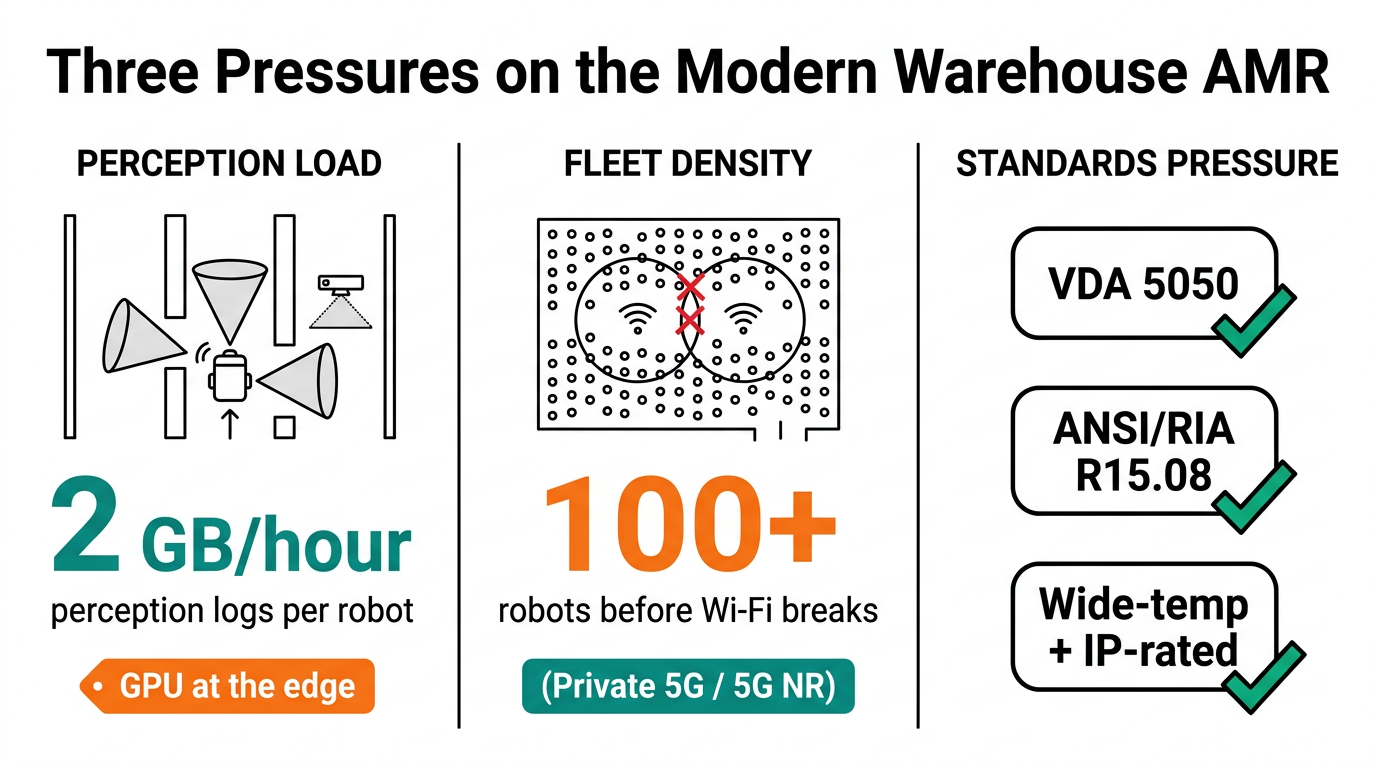

Three pressures land directly on the IPC sitting inside the AMR.

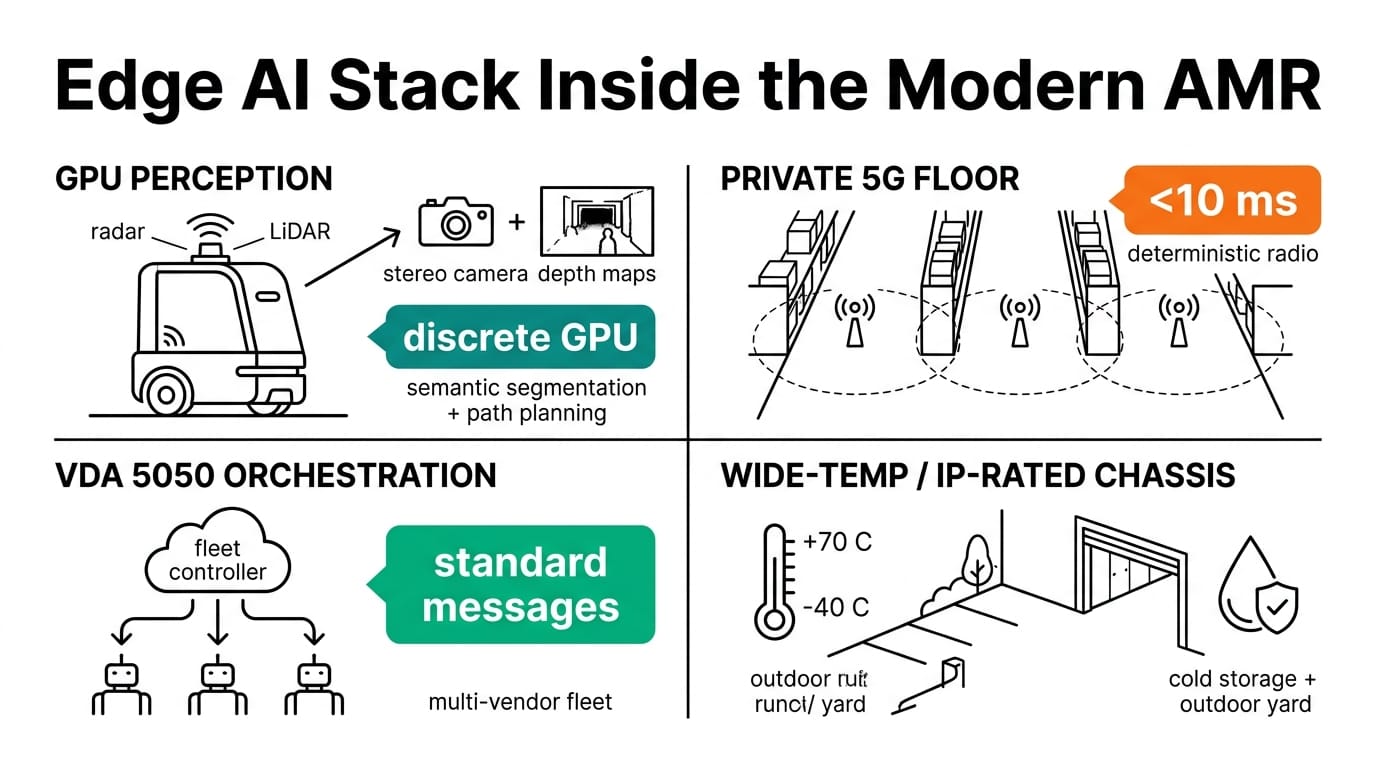

GPU at the edge. Stereo or 3D LiDAR perception, semantic segmentation, and path planning all need a discrete or integrated GPU on the robot itself. Cloud round-trips do not survive the latency budget. A platform like the Nuvo-10108GC, with a 350 W RTX-class GPU, a wide DC input, and shock-rated mounting, becomes a reasonable spec for the heavier robots in a fleet. A fanless NRU-220 based on Jetson Orin fits the smaller, battery-sensitive units that need a solid 30 to 60 TOPS of inference.
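The latency argument is easiest to see as distance: how far does the robot travel before a perception result comes back? The sketch below works that out. The travel speed and both latency figures are assumptions chosen for illustration, not measurements from any platform mentioned here.

```python
def blind_distance_m(speed_m_s: float, latency_ms: float) -> float:
    """Distance travelled while waiting for one inference result."""
    return speed_m_s * latency_ms / 1000.0


# Hypothetical figures for illustration only.
SPEED_M_S = 2.0
SCENARIOS = [("onboard GPU", 30.0), ("cloud round trip", 150.0)]

for label, latency_ms in SCENARIOS:
    distance = blind_distance_m(SPEED_M_S, latency_ms)
    print(f"{label}: {distance:.2f} m travelled before the result arrives")
```

Under these assumptions the robot covers 0.06 m while waiting for onboard inference and 0.30 m while waiting for a remote one, before any network jitter or retry is counted.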

Connectivity. Once a fleet crosses about 100 robots, Wi-Fi alone gets brittle. Private 5G or 5G NR becomes attractive for handover and roaming, and that changes the wiring closet at the dock, not just the robot. PoE+ industrial switches rated for -40 to 75 °C with dual DC inputs end up as standard equipment at charging stations and LiDAR uplinks.

Data. A modern AMR generates 0.5 to 2 GB per hour of perception logs that are actually useful for retraining. Operators have started keeping a rolling buffer on the robot and pushing deltas back to a fleet manager when the robot returns to its dock. The IPC needs M.2 NVMe headroom and a sane way to do over-the-air model updates.
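The rolling buffer described above can be sketched in a few lines. This is a minimal in-memory illustration of the eviction policy only, assuming logs are written as named segments of known size. The class name, segment names, and capacity are hypothetical, and a real implementation would track files on the NVMe drive.

```python
from collections import deque


class RollingLogBuffer:
    """Keeps the newest log segments within a fixed byte budget."""

    def __init__(self, capacity_bytes: int) -> None:
        self.capacity_bytes = capacity_bytes
        self.used_bytes = 0
        self._segments = deque()  # (name, size_bytes), oldest first

    def add(self, name: str, size_bytes: int) -> list:
        """Append a segment; return the names evicted to make room."""
        evicted = []
        self._segments.append((name, size_bytes))
        self.used_bytes += size_bytes
        # Evict oldest first, but always keep the segment just added.
        while self.used_bytes > self.capacity_bytes and len(self._segments) > 1:
            old_name, old_size = self._segments.popleft()
            self.used_bytes -= old_size
            evicted.append(old_name)
        return evicted

    def names(self) -> list:
        """Segment names currently held, oldest first."""
        return [name for name, _ in self._segments]


# Hypothetical example: 2 GB segments into a 4 GB budget.
GB = 1024 ** 3
buf = RollingLogBuffer(capacity_bytes=4 * GB)
buf.add("hour-1", 2 * GB)
buf.add("hour-2", 2 * GB)
dropped = buf.add("hour-3", 2 * GB)
print(dropped, buf.names())  # ['hour-1'] ['hour-2', 'hour-3']
```

At the upper end of the 0.5 to 2 GB per hour range, an eight-hour stretch between dock visits already implies 16 GB of buffer before any safety margin, which is why storage headroom belongs in the IPC spec.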

What to Watch

Standardisation. VDA 5050 is finally seeing real adoption for multi-vendor fleets, which means the IPC has to speak a defined message bus and not a vendor-specific one.
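As a rough illustration of what "a defined message bus" means in practice, the sketch below builds an MQTT topic and a common message header in the shape we understand VDA 5050 to use (interface name, major version, manufacturer, serial number, topic). The manufacturer and serial number are made up, the helper names are ours, and the field names and version string should be checked against the specification release your fleet manager targets. Publishing is left to whichever MQTT client you already use.

```python
import json
from datetime import datetime, timezone


def vda5050_topic(manufacturer: str, serial_number: str, topic: str,
                  interface_name: str = "uagv", major_version: str = "v2") -> str:
    """Build a topic path; layout assumed from the VDA 5050 spec, verify before use."""
    return "/".join([interface_name, major_version, manufacturer, serial_number, topic])


def message_header(header_id: int, manufacturer: str, serial_number: str,
                   version: str = "2.0.0") -> dict:
    """Header fields assumed to be common to every message on the bus."""
    now = datetime.now(timezone.utc).isoformat(timespec="milliseconds")
    return {
        "headerId": header_id,
        "timestamp": now.replace("+00:00", "Z"),
        "version": version,
        "manufacturer": manufacturer,
        "serialNumber": serial_number,
    }


# Hypothetical robot identity, for illustration only.
topic = vda5050_topic("ExampleRobotics", "amr-0042", "state")
payload = json.dumps(message_header(1, "ExampleRobotics", "amr-0042"))
print(topic)  # uagv/v2/ExampleRobotics/amr-0042/state
print(payload)
```

The point for the IPC is that topic layout and payload schema are fixed by the standard rather than by the robot vendor, so the same fleet manager can address robots from different manufacturers.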

Safety certification. ANSI/RIA R15.08 part 2 (mobile robot safety) is starting to bite during procurement. Compute that can host an SRP/CS-grade safety controller alongside the AI workload, on the same wide-temp chassis, is a real differentiator.

Cold storage and outdoor yard. AMRs are leaving the climate-controlled DC. That is where wide-temp, IP-rated, fanless platforms stop being a nice-to-have.

Conclusion

Warehouse AMRs are being asked to do more, perceive more, and coordinate more, all while the fleet grows. The compute on each robot is the lever that decides whether the operator gets there. Spec the IPC the way you would spec the drivetrain: for the work it actually has to do five years out, not the work you piloted last quarter.

Follow Neteon on LinkedIn, or get in touch via www.neteon.net.

FAQs

How many AMRs will a typical tier-one distribution centre run by 2030?

Industry trackers project tier-one DCs moving from 20 to 80 AMRs in 2026 toward 500-plus by 2030, with a meaningful inflection around 2028 as 150 to 300 robot fleets become normal.

What kind of compute does a 2026-era warehouse AMR actually need?

Two tiers are emerging. Smaller battery-sensitive AMRs do well on a fanless Jetson-class platform like the NRU-220 with 30 to 60 TOPS for vision and SLAM. Heavier robots running stereo or 3D LiDAR fusion plus discrete GPU inference move to a Nuvo-10108GC-class system with a 350 W RTX-class GPU on a wide DC input.

When does a warehouse fleet outgrow Wi-Fi?

Operators tend to feel it around the 100-robot mark. Roaming and handover get brittle, and that is when private 5G or 5G NR becomes the practical option. The wiring closet at the dock gets upgraded too, usually to wide-temp PoE+ industrial managed switches with dual DC inputs and SFP uplinks for charging-station LiDAR and Wi-Fi APs.

What standards should AMR procurement teams pay attention to?

VDA 5050 for multi-vendor fleet messaging is the big one; ANSI/RIA R15.08 part 2 for mobile robot safety is the second. Both increasingly show up as procurement requirements rather than nice-to-haves.

Why does the AMR's onboard storage and update path matter?

A modern AMR generates 0.5 to 2 GB per hour of perception logs that feed back into model retraining. That requires M.2 NVMe headroom on the IPC plus a clean over-the-air model update path so new weights can be pushed to the fleet without truck rolls.